"Workplace" by Facebook was designed to help employees collaborate with one another on products, listen to their bosses speak on Facebook Live and post updates on their work in the News Feed. It was launched globally at the Facebook Inc. offices in London on Oct. 10. (Photo by Jason Alden/Bloomberg via Getty Images)

For better or worse, America is in the midst of a silent revolution. Many of the decisions that humans once made are being handed over to mathematical formulas. With the correct algorithms, the thinking goes, computers can drive cars better than human drivers, trade stock better than Wall Street traders and deliver to us the news we want to read better than newspaper publishers.

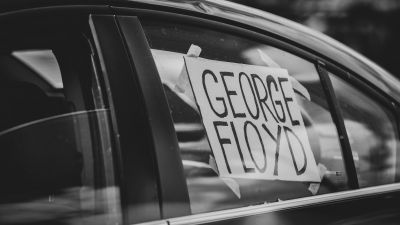

But with this handoff of responsibility comes the possibility that we are entrusting key decisions to flawed and biased formulas. In a recent book that was longlisted for the National Book Award, Cathy O’Neil, a data scientist, blogger and former hedge-fund quant, details a number of flawed algorithms to which we have given incredible power — she calls them “Weapons of Math Destruction.” We have entrusted these WMDs to make important, potentially life-altering decisions, yet in many cases, they embed human race and class biases; in other cases, they don’t function at all.

Weapons of Math Destruction, by Cathy O’Neil.

Among other examples, O’Neil examines a “value-added” model New York City used to decide which teachers to fire, even though, she writes, the algorithm was useless, functioning essentially as a random number generator, arbitrarily ending careers. She looks at models put to use by judges to assign recidivism scores to inmates that ended up having a racist inclination. And she looks at how algorithms are contributing to American partisanship, allowing political operatives to target voters with information that plays to their existing biases and fears.

A veteran of Occupy Wall Street, O’Neil has now founded a company, O’Neil Risk Consulting & Algorithmic Auditing, or ORCAA (complete with whale logo), to “audit” these privately designed secret algorithms — she calls them “black boxes” — that hold public power. “It’s the age of the algorithm,” the company’s website warns, “and we have arrived unprepared.” She hopes to put her mathematical knowledge to use for the public good, taking apart algorithms that affect people’s lives to see if they are actually as fair as their designers claim. It’s a role she hopes a government agency might someday take on, but in the meantime it falls to crusading data scientists like O’Neil.

We spoke with her recently about how America should confront these WMDs. This interview has been edited for length and clarity.

— Cathy O'Neil

John Light: What do you hope people take away from reading this book? What did you set out to do when you were writing it?

Cathy O’Neil: The most important goal I had in mind was to convince people to stop blindly trusting algorithms and assuming that they are inherently fair and objective. I wanted to prevent people from giving them too much power. I see that as a pattern. I wanted that to come to an end as soon as possible.

Light: So, for example, what’s the difference between an algorithm that makes racist decisions and a person at the head of a powerful institution or in a place of power making those racist decisions?

O’Neil: I would argue that one of the major problems with our blind trust in algorithms is that we can propagate discriminatory patterns without acknowledging any kind of intent.

Let’s look at it this way: We keep the responsibility at arm’s length. So, if you had an actual person saying, “Here’s my decision. And I made a decision and it was a systematically made decision,” then they would clearly be on the hook for how they made those decisions.

But because we have an algorithm that does it, we can point to the algorithm and say, “We’re trusting this algorithm, and therefore this algorithm is responsible for its own fairness.” It’s kind of a way of distancing ourselves from the question of responsibility.

Cathy O’Neil

Light: Most Americans may not even understand what algorithms are, and if they do, they can’t really take them apart. As a mathematician, you are part of this class of people who can access these questions. I’m curious why you think you were the one to write this book, and, also, why this isn’t a discussion that we’ve been having more broadly before now?

O’Neil: That second question is a hard one to answer, and it gets back to your first question: Why is it me? And it’s simply me because I realized that the entire society should be having this conversation. But I guess most people were not in the place where they could see it as clearly as I could. And the reason I could see so clearly is that I know how models are built, because I build them myself, so I know that I’m embedding my values into every single algorithm I create and I am projecting my agenda onto those algorithms. And it’s very explicit for me. I should also mention that a lot of the people I’ve worked with over the years don’t think about it that way.

Light: What do you mean? They don’t think about it what way?

O’Neil: I’m not quite sure, but there’s less of a connection for a lot of people between the technical decisions we make and the ethical ramifications we are responsible for.

For whatever reason — I can think about my childhood or whatever — but for whatever reason, I have never separated the technical from the ethical. When I think about whether I want to take a job, I don’t just think about whether it’s technically interesting, although I do consider that. I also consider the question of whether it’s good for the world.

So much of our society as a whole is gearing us to maximize our salary or bonus. Basically, we just think in terms of money. Or, if not money, then, if you’re in academia, it’s prestige. It’s a different kind of currency. And there’s this unmeasured dimension of all jobs, which is whether it’s improving the world. [laughs] That’s kind of a nerdy way of saying it, but it’s how I think about it.

The training one receives when one becomes a technician, like a data scientist — we get trained in mathematics or computer science or statistics — is entirely separated from a discussion of ethics. And most people don’t have any association in their minds with what they do and with ethics. They think they somehow moved past the questions of morality or values or ethics, and that’s something that I’ve never imagined to be true.

Especially from my experience as a quant in a hedge fund — I naively went in there thinking that I would be making the market more efficient and then was like, oh my God, I’m part of this terrible system that is blowing up the world’s economy, and I don’t want to be a part of that.

And then, when I went into data science, I was working with people, for the most part, who didn’t have that experience in finance and who felt like because they were doing cool new technology stuff they must be doing good for the world. In fact, it’s kind of a dream. It’s a standard thing you hear from startup people — that their product is somehow improving the world. And if you follow the reasoning, you will get somewhere, and I’ll tell you where you get: You’ll get to the description of what happens to the winners under the system that they’re building.

Every system using data separates humanity into winners and losers. They’ll talk about the winners. It’s very consistent. An insurance company might say, “Tell us more about yourself so your premiums can go down.” When they say that, they’re addressing the winners, not the losers. Or a peer-to-peer lender site might say, “Tell us more about yourself so your interest rate will go down.” Again, they’re addressing the winners of that system. And that’s what we do when we work in Silicon Valley tech startups: We think about who’s going to benefit from this. That’s almost the only thing we think about.

Light: Silicon Valley hasn’t had a moment of reckoning, but the financial world has: In 2008, its work caused the global economy to fall apart. This was during the time that you were working there. Do you think that, since then, Wall Street, and the financial industry generally, has become more aware of its power and more careful about how that power is used?

O’Neil: I think what’s happened is that the general public has become much more aware of the destructive power of Wall Street. The disconnect I was experiencing was that people hated Wall Street, but they loved tech. That was true when I started this book; I think it’s less true now — it’s one of the reasons the book had such a great reception. People are starting to be very skeptical of the Facebook algorithm and all kinds of data surveillance. I mean, Snowden wasn’t a thing when I started writing this book, but people felt like they were friends with Google, and they believed in the “Do No Evil” thing that Google said. They trusted Google more than they trusted the government, and I never understood that. For one reason, the NSA buys data from private companies, so the private companies are the source of all this stuff.

The point is that there are two issues. One issue is the public perception — and I thought, look, the public trusts big data way too much. We’ve learned our lesson with finance because they made a huge goddamn explosion that almost shut down the world. But the thing I realized is that there might never be an explosion on the scale of the financial crisis happening with big data. There might never be that moment when everyone says, “Oh my God, big data is awful.”

The reason there might never be that moment is that by construction, the world of big data is siloed and segmented and segregated so that successful people, like myself — technologists, well-educated white people, for the most part — benefit from big data, and it’s the people on the other side of the economic spectrum, especially people of color, who suffer from it. They suffer from it individually, at different times, at different moments. They never get a clear explanation of what actually happened to them because all these scores are secret and sometimes they don’t even know they’re being scored. So there will be no explosion where the world sits up and notices, like there was in finance. It’s a silent failure. It affects people in quiet ways that they might not even notice themselves. So how could they organize and resist?

Light: Right, and that makes it harder to create change. Everybody in Washington, DC, could stand up and say they were against the financial crisis. But with big data, it would be much harder to really get politicians involved in putting something like the Consumer Financial Protection Bureau in place, right?

O’Neil: Well, absolutely. We don’t have the smoking gun, for the most part, and that was the hardest part about writing this book, because my editor, quite rightly, wanted me to show as much evidence of harm as possible, but it’s really hard to come by. When people are not given an option by some secret scoring system, it’s very hard to complain, so they often don’t even know that they’ve been victimized.

If you think about the examples of the recidivism scores or predictive policing, it’s very, very indirect. Again, I don’t think anybody’s ever notified that they were sentenced to an extra two years because their recidivism score had been high, or notified that this beat cop happened to be in their neighborhood checking people’s pockets for pot because of a predictive policing algorithm. That’s just not how it works. So evidence of harm is hard to come by. It’s exactly what you just said: It will be hard to create the Consumer Financial Protection Bureau around this crisis.

Light: If the moderator of one of the presidential debates were to read your book, what would you hope that it would inspire him or her to ask?

O’Neil: Can I ask a loaded question?

Light: Sure.

O’Neil: You could ask, “Can you comment on the widespread usage of algorithms to replace human resources as part of the company, and what do you think the consequences will be?”

Light: Occupy Wall Street didn’t even exist in 2008 when Obama was elected, and this year, as we’re electing his successor, I think Occupy’s ideas have been a big part of the dialogue. You were a member of Occupy Wall Street — have you been struck by the ways in which they’ve contributed to the national conversation?

O’Neil: I still am a member. I facilitate a group every Sunday at Columbia. I know, for one thing, that I could not have written this book without my experiences at Occupy. Occupy provided me a lens through which to see systemic discrimination. The national conversation around white entitlement, around institutionalized racism, the Black Lives Matter movement, I think, came about in large part because of the widening and broadening of our understanding of inequality. That conversation was begun by Occupy. And it certainly affected me very deeply: Because of my experience in Occupy — and this goes right back to what I was saying before — instead of asking the question, “Who will benefit from this system I’m implementing with the data?” I started to ask the question, “What will happen to the most vulnerable?” Or “Who is going to lose under this system? How will this affect the worst-off person?” Which is a very different question from “How does this improve certain people’s lives?”

Light: Can you talk a bit about your efforts to test various algorithms that are already being used to make decisions — what you call “auditing the black box”?

O’Neil: It’s very much in its infancy. The best example I have so far is a ProPublica investigation led by Julia Angwin on the recidivism risk algorithm called the COMPAS model in Florida. It came out a couple months ago, and she did an open-source audit of the data that she found. It’s kind of ideal in a lot of ways. It’s a great archetype because it is, first of all, open-source, so that means if people don’t like her analysis they can redo it. But it’s investigating what is a black box. She has enough data about the way that black box works. She inferred that the black box has bias in various ways. Obviously the more transparency we have as auditors, the more we can get, but the main goal is to understand important characteristics about a black box algorithm without necessarily having to understand every single granular detail of the algorithm.

Light: Right, to understand the range of outcomes an algorithm can create.

O’Neil: Yeah, we want to basically test it for fairness, test it for meaning. With recidivism algorithms, for example, I worry about racist outcomes. With personality tests [for hiring], I worry about filtering out people with mental health problems from jobs. And with a teacher value-added model algorithm [used in New York City to score teachers], I worry literally that it’s not meaningful. That it’s almost a random number generator.

There are lots of different ways that algorithms can go wrong, and what we have now is a system in which we assume because it’s shiny new technology with a mathematical aura that it’s perfect and it doesn’t require further vetting. Of course, we never have that assumption with other kinds of technology. We don’t let a car company just throw out a car and start driving it around without checking that the wheels are fastened on. We know that would result in death; but for some reason we have no hesitation at throwing out these algorithms untested and unmonitored even when they’re making very important life-and-death decisions.

The point is that we don’t even have a definition of safety for these algorithms. And it’s urgently needed.
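One way to picture the kind of outcome audit described above is a minimal sketch that compares error rates across groups without looking inside the black box. Everything here is an assumption for illustration: the record layout `(group, flagged_high_risk, reoffended)`, the function name `false_positive_rates`, and the made-up records are hypothetical, and this is not the method ProPublica or ORCAA actually used.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Return, per group, the share of people who did NOT reoffend
    but were still flagged as high risk by the black-box score."""
    flagged = defaultdict(int)   # non-reoffenders flagged high risk, per group
    total = defaultdict(int)     # all non-reoffenders, per group
    for group, flagged_high_risk, reoffended in records:
        if not reoffended:
            total[group] += 1
            if flagged_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}

# Hypothetical, made-up records: (group, flagged_high_risk, reoffended)
records = [
    ("A", True, False), ("A", True, False), ("A", False, False), ("A", False, False),
    ("B", True, False), ("B", False, False), ("B", False, False), ("B", False, False),
    ("A", True, True), ("B", True, True),
]

rates = false_positive_rates(records)
print(rates)
```

A large gap between groups in a measure like this would be one signal worth investigating; it only needs the algorithm's outputs and the observed outcomes, not its internals.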

Light: At the end of your book, you suggest that there is a role for government to play here. Until government steps up, who should be doing these audits and elevating these problems? Journalists like those at ProPublica?

O’Neil: Well, I think journalists are generally not paid enough to do this stuff. It’s pretty hard because it’s a combination of long investigation and technical expertise. ProPublica is perfectly set up for that. Obviously not every newsroom is.

I set up a company, an algorithmic auditing company myself. I have no clients. [laughs] But I would love clients. I’m thinking along the lines of, why doesn’t the ACLU or a teachers’ union file some Freedom of Information Act requests or use their resources to actually sue for these black box algorithms, and then I can audit them? It’s kind of a team effort because you’re going to need to have some expertise of the law on one side and then some on the technical side as well.

I think that we have the skills, so I think it’s just a question of timing. We’re going to start seeing progress in this relatively soon, and I think the low-hanging fruit are those personality tests and other kinds of algorithms that have replaced hiring processes — essentially because there are already laws on the books about what is a fair hiring practice, and because the Supreme Court upheld disparate impact as a legal argument in the last year, which is very important.

Light: In the book, you draw a comparison to the moment when the government eventually had to step in during the industrial revolution, and you argue that we’re in a similar revolution now. When it does step in, do you imagine an agency like the Consumer Financial Protection Bureau [which was set up after the financial crisis] coming into play to ultimately do this kind of work?

O’Neil: My fantasy is that there is a new regulatory body that is in charge of algorithmic auditing.

Light: One criticism of financial regulators is that bodies like the SEC are constantly two steps behind the folks who are doing the most interesting and potentially dangerous work in markets and banking. Do you see that criticism also being an issue as we attempt to keep abreast of these algorithms that are constantly developing?

O’Neil: Yes and no. At least in my book, I made an attempt to focus in on the algorithms that matter the most. I have no optimism about the idea that we’ll be able to audit all algorithms. It’s not feasible, and it’s not what we want. What we want to do is have certain classes of algorithms that need to be feasibly fair and legal. And one of those classes would be hiring algorithms. You see what I mean? Just like we have regulations in other industries, we could regulate those algorithms to have to abide by a certain kind of format so that they are easy to audit. Most algorithms will not be under regulation as far as I’m anticipating.

Light: What has been the response to the book?

O’Neil: It’s been incredible and massive. It was standing-room-only at the Harvard Book Store on Monday night. Seattle Town Hall was amazing. I went to a Silicon Valley conference for people interested in security and privacy, and those folks were extremely interested in the book. Especially because of the political season, people are highly, keenly aware of the effect that, for example, Facebook and Google have on this information flow. That is one thing we talk about: how we receive information and what kind of information we receive based on online algorithms. It’s a really hot topic.

Light: In the chapter on that topic, you talked about micro-targeting, and you had a stat: 40 percent of people think Obama’s a Muslim. This is, in part, a testament to the potency of micro-targeting. Would you mind just talking briefly about how that works? How do algorithms contribute to 40 percent of people thinking Obama’s a Muslim?

O’Neil: Let me give it to you this way: The Facebook algorithm designers chose to let us see what our friends are talking about. They chose to show us, in some sense, more of the same. And that is the design decision that they could have decided differently. They could have said, “We’re going to show you stuff that you’ve probably never seen before.” I think they probably optimized their algorithm to make the most amount of money, and that probably meant showing people stuff that they already sort of agreed with, or were more likely to agree with. But what’s happening is that they made this design decision and it described the universe according to our friends. So all of us and our friends have been cycled through this echo-chamber universe for a decade now, and it’s really changed not only what we think but what our friends think.

And that is in large part to blame for the people who think that Obama is a Muslim — they are friends with people who think that Obama is a Muslim. Or that he was born outside the United States, or whatever conspiracy theory you’re talking about. It could be on the left or the right, but the point is that this is a perfect cocktail for conspiracy theories in whatever form they are.

Then we have micro-targeting coming in. And micro-targeting is the ability for a campaign to profile you, to know much more about you than you know about it, and then to choose exactly what to show you. And because we’ve already sort of become hyper-partisan, it makes it all the more successful and efficient on the part of campaigns to be able to do this.

Light: As you’ve been on book tour, have you been hearing from the sort of people who are inside the actual Weapons of Math Destruction, inside the algorithms, working for Facebook, Google, or other organizations?

O’Neil: I have been hearing some things from the victims of WMDs, in particular the teachers. Diane Ravitch was tweeting about it. There have been quite a few teachers who have been scored by the value-added model who have come to my talks and have told me how important it is.

I gave a talk at Google in Boston a couple of days ago, on Monday, and while I’m talking to Google engineers, none of them really addressed the extent to which they work on this stuff. So I think, I mean, Google is so big you have no idea what a given person does. I have not yet been invited by Facebook to give a talk. [laughs] Or, for that matter, by any of the data companies that I target in my book.

Light: What do you make of that?

O’Neil: I think big data companies only like good news. So I think they’re just hoping that they don’t get sued, essentially. I mean, I guess another alternative would be for them to write a piece that could explain why I’m wrong. I haven’t seen anything like that. And if you do see that kind of thing, please tell me.

Light: Near the end of your book you say there are just certain things we can’t model right now. Maybe the effectiveness of teachers is one of them. We just don’t know enough about what makes a good teacher, and how to quantify the things that make a good teacher in an algorithm. Do you think there will ever be a day when we can model things like that? Do you see it happening 50 years, 100 years down the road?

O’Neil: I think there’s inherently an issue that models will literally never be able to handle, which is that when somebody comes along with a new way of doing something that’s really excellent, the models will not recognize it. They only know how to recognize excellence when they can measure it somehow. So in that philosophical sense, the answer is you’ll never be able to really measure anything, right? Including teachers.

But on the other hand, I don’t want to go on record saying that we’ll never be able to get it better than the value-added model for teachers. First of all, it’s such a shitty model that I’m sure we can. But I also probably wouldn’t have guessed that we could have a self-driving car 10 years ago, but we can. Imagine that we have sensors in the classroom, and with those sensors we figure out when kids are responding, and when their voices are nonaggressive, and we can figure out if the teacher had everyone participate. I have no idea. The point is: There very well might be a better way of doing this, but once we have a candidate, a better way of doing this, what we absolutely must do is compare it to a ground truth and validate it and have ample evidence that it is exactly equivalent to that ground truth, so that we can replace that expensive quantitative ground truth with a data-driven algorithm and we will not be losing valuable information.

In other words, our standards for what makes it good enough have to go way, way up. Just like the standards for what makes a good self-driving car are very high — we don’t just want it to not get into a crash in the first 100 yards, we need it to be better than a person driving it. It’s a very high standard. We can’t just throw something out there and assume it works just because it has math in it.
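The validation step described above, comparing a candidate model to a ground truth before trusting it, can be sketched roughly as follows. This is only an illustration under stated assumptions: Pearson correlation is one possible measure of agreement, not one named in the interview, and the 0.9 threshold, the function names, and all the scores are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

def passes_validation(model_scores, ground_truth, threshold=0.9):
    """Accept the model only if it tracks the ground truth closely.
    The threshold is an arbitrary assumption chosen for illustration."""
    return pearson(model_scores, ground_truth) >= threshold

# Hypothetical scores for five teachers
ground_truth = [1, 2, 3, 4, 5]            # e.g. careful expert evaluation
close_model = [1.1, 2.0, 2.9, 4.2, 5.0]   # tracks the ground truth
noisy_model = [3, 1, 5, 2, 4]             # close to a random ordering

print(passes_validation(close_model, ground_truth))
print(passes_validation(noisy_model, ground_truth))
```

A model that behaves almost like a random number generator would fail a check of this kind, which is the point of raising the bar before an algorithm replaces the slower, more expensive evaluation.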